Lazy rule writing causes cybersecurity incidents, not keyboard-smashing hackers

There’s a popular story we tell ourselves about breaches: a determined attacker, chaining exploits, pounding away until something gives.

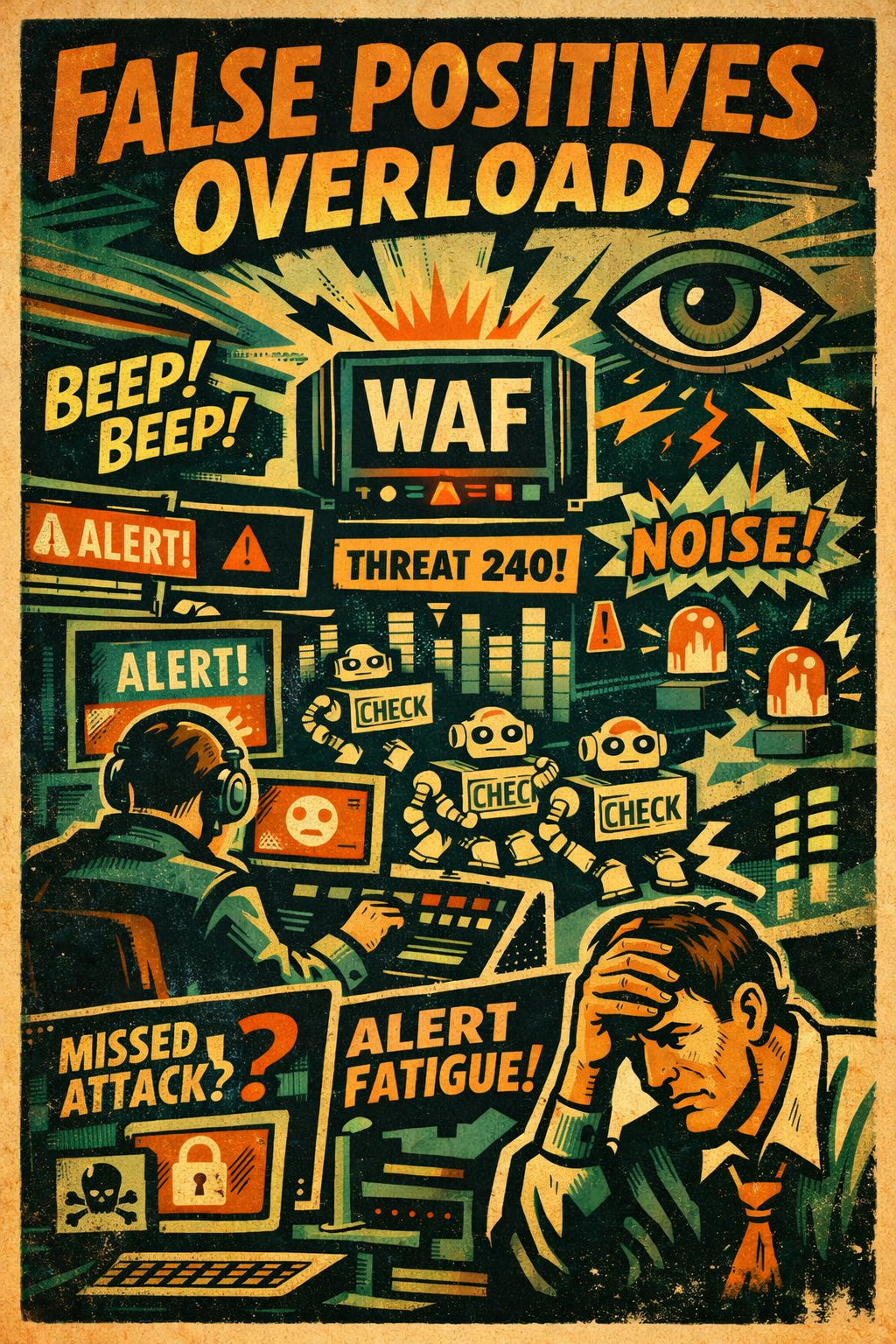

In a lot of real-world incidents, the “break-in” doesn’t start with brilliance. It starts with noise; specifically, a noisy detection rule that turns your SOC into a factory that processes alerts instead of reducing risk.

Picture this

Your security team is on the clock 24/7. The client has just rolled out a Web Application Firewall (WAF). Your monitoring stack leans on IDS/IPS to block what it can and alert on what it can’t.

Then the console lights up.

A steady stream: 100 alerts an hour.

“Web Application: Violation Detected.”

At first, it looks like the WAF is doing its job. The problem is, it isn’t. The “violations” are routine health checks: an uptime monitor hitting /health, as it has done a thousand times before. The WAF rule is written in a way that treats boring automation as hostile behavior, and it does so relentlessly.

That’s how you end up with a security team spending its best hours chasing the security equivalent of shadows on the wall.

What’s wrong with the rule

1) Static threat scoring with no context

The rule drags around a historical threat score (say, a “history threat weight” of 240) and uses it like a blunt instrument. Meanwhile, the current request has a threat weight of 0.

That’s not detection. That’s a grudge.

If the rule can’t distinguish between “this source was suspicious last week” and “this request is suspicious right now,” it’s not measuring risk. It’s generating work.

2) No separation between historical suspicion and real-time behavior

A lot of bad rules treat history like destiny. An IP that once triggered something gets branded, and every subsequent request becomes “evidence.”

The result is predictable: the system keeps yelling even when nothing is happening, and everyone learns to tune it out.
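Points 1 and 2 come down to what a rule is allowed to fire on. A minimal sketch, assuming each request carries two hypothetical fields: `history_weight` (accumulated suspicion for the source) and `current_weight` (how suspicious this request looks on its own). The field names and thresholds are illustrative, not any particular WAF’s schema or defaults.

```python
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical fields for illustration; real WAFs name and scale these differently.
    source_ip: str
    path: str
    history_weight: int  # accumulated suspicion for this source
    current_weight: int  # suspicion scored from this request alone


# Illustrative thresholds (assumptions, not vendor defaults).
HISTORY_THRESHOLD = 200
CURRENT_THRESHOLD = 50


def naive_rule(req: Request) -> bool:
    """The anti-pattern: history alone is enough to fire, even on a clean request."""
    return req.history_weight >= HISTORY_THRESHOLD


def contextual_rule(req: Request) -> bool:
    """Fire only when this request is suspicious; history can lower the bar, not replace it."""
    if req.current_weight == 0:
        return False
    # A source with a bad history needs less current evidence, but still needs some.
    if req.history_weight >= HISTORY_THRESHOLD:
        threshold = CURRENT_THRESHOLD // 2
    else:
        threshold = CURRENT_THRESHOLD
    return req.current_weight >= threshold


health_check = Request("203.0.113.10", "/health", history_weight=240, current_weight=0)
print(naive_rule(health_check))       # True: the grudge fires
print(contextual_rule(health_check))  # False: nothing is happening right now
```

In the contextual version, history can make a suspicious request escalate sooner, but it can never clear the bar on its own.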

3) Overbroad logic that flags “unknown” as “malicious”

This is the classic anti-pattern: if it’s not verified, it must be bad. If it’s repeated, it must be probing. If it’s automated, it must be an attacker.

That’s not threat detection. That’s paranoia turned into automation.

4) Automated traffic becomes an alert printer

Health checks are designed to be repetitive. They run every minute, sometimes every few seconds. So the same bot hits /health, and the same rule triggers, and the same alert fires.

Now you’re not dealing with a few false positives. You’re dealing with a system that manufactures them at scale.

5) No allowlist for trusted services

A mature setup makes room for known-good automation: uptime monitors, internal probes, synthetic transactions, trusted API clients.

This rule treats all of it as suspect and escalates it as incident-grade activity. Which means the SOC burns time triaging “attacks” that are really just the client’s own monitoring doing its job.

The consequence

This is the part that gets missed in postmortems because it doesn’t look dramatic.

The team sees hundreds of “violations” and does what humans always do: they adapt. They start skimming. They start batching. They start assuming the next one is the same as the last one.

Alert fatigue isn’t laziness. It’s a survival mechanism.

And then the real incident happens on a different client, a different alert stream, a different day. Except the team is already trained to distrust what the tools are telling them.

A real incident is missed or delayed.

The client isn’t informed in time.

A compromised machine stays exposed longer than it should, and the damage spreads.

All because a bad rule elsewhere taught everyone the same lesson: most of these alerts are nonsense.

That’s the risk. Not just false positives. False confidence.

How to fix the alert mismanagement

You don’t fix this by telling analysts to “be more vigilant.” You fix it by making the system stop lying.

1) Decay old threat scores over time

History has value, but it shouldn’t be a life sentence. Age out old suspicion unless it’s reinforced by current behavior. Otherwise you’re just punishing yesterday’s traffic forever.
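One common way to age out suspicion is exponential decay with a half-life. A minimal sketch, assuming each score is stored alongside the time it was last updated; the one-day half-life is an arbitrary illustration, not a recommended value.

```python
def decayed_score(score: float, age_seconds: float, half_life_seconds: float) -> float:
    """Exponentially decay a historical threat score: it halves every half_life_seconds."""
    if age_seconds <= 0:
        return score
    return score * 0.5 ** (age_seconds / half_life_seconds)


def update_score(previous: float, age_seconds: float, new_evidence: float,
                 half_life_seconds: float = 24 * 3600) -> float:
    """Age out old suspicion first, then add whatever the current request contributes."""
    return decayed_score(previous, age_seconds, half_life_seconds) + new_evidence


# A score of 240 recorded a week ago, one-day half-life, no new evidence:
week = 7 * 24 * 3600
print(update_score(240, week, 0))  # 1.875
```

After seven half-lives, last week’s 240 has faded to under 2, so it can no longer dominate a request whose own weight is 0. Fresh evidence still adds on top, which is how reinforced suspicion stays alive.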

2) Allowlist trusted health checks and known good automation

If you know the monitor, treat it like a monitor. Don’t page the SOC because a bot did the job you hired it to do.
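A sketch of an allowlist scoped to what the monitor actually does, not just where it comes from. The CIDR range, paths, and methods are hypothetical placeholders (198.51.100.0/24 is a documentation range); in practice they would come from the monitoring vendor’s published source ranges and the client’s health-check configuration.

```python
import ipaddress

# Hypothetical monitor profile: where it comes from AND what it is expected to do.
TRUSTED_MONITORS = [
    {
        "network": ipaddress.ip_network("198.51.100.0/28"),
        "paths": {"/health", "/ready"},
        "methods": {"GET", "HEAD"},
    },
]


def is_trusted_monitor(source_ip: str, method: str, path: str) -> bool:
    """True only if source, method, and path all match a known monitor profile."""
    addr = ipaddress.ip_address(source_ip)
    for entry in TRUSTED_MONITORS:
        if addr in entry["network"] and method in entry["methods"] and path in entry["paths"]:
            return True
    return False


print(is_trusted_monitor("198.51.100.5", "GET", "/health"))  # True
print(is_trusted_monitor("198.51.100.5", "POST", "/login"))  # False: right source, wrong behavior
print(is_trusted_monitor("203.0.113.9", "GET", "/health"))   # False: unknown source
```

Matching on method and path as well as source means the allowlist exempts the monitor’s job, not everything that happens to arrive from its addresses.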

3) Use matching logic that reflects intent

If you’re going to alert, alert on something that looks like an attack:

unusual paths (/admin, /login, parameter-heavy endpoints)

unusual methods (verbs you don’t expect)

suspicious payload patterns

header anomalies that don’t match the “known good” client

request rates that look like scanning, not monitoring
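The signals above can be expressed as a small function that reports which attack-like traits a request shows. This is a sketch under stated assumptions: the path prefixes, method set, parameter limit, user-agent check, and regex patterns are illustrative stand-ins, far simpler than a production ruleset. The rate check assumes that many distinct paths in one window look like scanning, while one repeated path looks like monitoring.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional
from urllib.parse import parse_qsl


@dataclass
class HttpRequest:
    method: str
    path: str
    query: str = ""
    body: str = ""
    headers: Dict[str, str] = field(default_factory=dict)


# Illustrative values only (assumptions); tune them to the application you protect.
SENSITIVE_PREFIXES = ("/admin", "/login")
EXPECTED_METHODS = {"GET", "HEAD", "POST"}
MAX_QUERY_PARAMS = 15
PAYLOAD_PATTERNS = [
    re.compile(r"(?i)union\s+select"),  # SQL injection probe
    re.compile(r"(?i)<script\b"),       # script injection probe
    re.compile(r"\.\./"),               # path traversal
]


def intent_signals(req: HttpRequest, expected_user_agent: Optional[str] = None) -> List[str]:
    """Return the attack-like traits a request shows; an empty list means nothing to alert on."""
    signals = []
    if req.path.startswith(SENSITIVE_PREFIXES):
        signals.append("sensitive_path")
    if req.method.upper() not in EXPECTED_METHODS:
        signals.append("unexpected_method")
    if len(parse_qsl(req.query, keep_blank_values=True)) > MAX_QUERY_PARAMS:
        signals.append("many_parameters")
    haystack = f"{req.path}?{req.query}\n{req.body}"
    if any(p.search(haystack) for p in PAYLOAD_PATTERNS):
        signals.append("suspicious_payload")
    if expected_user_agent is not None and req.headers.get("User-Agent") != expected_user_agent:
        signals.append("header_mismatch")
    return signals


def looks_like_scanning(paths_in_window: List[str], distinct_threshold: int = 20) -> bool:
    """A monitor repeats one path; a scanner sprays many distinct paths in the same window."""
    return len(set(paths_in_window)) >= distinct_threshold


print(intent_signals(HttpRequest("GET", "/health")))  # []
print(intent_signals(HttpRequest("GET", "/admin", query="id=1 UNION SELECT password FROM users")))
# ['sensitive_path', 'suspicious_payload']
print(looks_like_scanning(["/health"] * 60))  # False
```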

4) Suppress duplicates for known safe traffic

If the same benign signature repeats, summarize it instead of screaming about it all day. A dashboard counter beats a thousand identical alerts.
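A sketch of that summarization, assuming alerts can be reduced to a hypothetical (rule name, source IP, path) signature and that the known-safe signatures come from the same allowlist the team already maintains.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple

# Hypothetical alert signature: (rule name, source IP, path).
Alert = Tuple[str, str, str]


def summarize(alerts: Iterable[Alert], known_safe: Set[Alert]) -> Tuple[List[Alert], Counter]:
    """Escalate anything unrecognized; collapse known-safe repeats into a per-signature count."""
    escalate: List[Alert] = []
    suppressed: Counter = Counter()
    for alert in alerts:
        if alert in known_safe:
            suppressed[alert] += 1  # counted for the dashboard, never paged
        else:
            escalate.append(alert)
    return escalate, suppressed


health = ("Web Application: Violation Detected", "198.51.100.5", "/health")
probe = ("Web Application: Violation Detected", "203.0.113.9", "/admin")
escalate, suppressed = summarize([health] * 100 + [probe], known_safe={health})
print(escalate)            # [('Web Application: Violation Detected', '203.0.113.9', '/admin')]
print(suppressed[health])  # 100
```

An hour of health-check noise becomes one counter, and the single alert that actually matters is the only thing left in the queue.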

5) Verify bots instead of guessing

“Bot” is not a synonym for “bad.” Validate known services where possible, and reserve escalation for traffic that fails verification or behaves inconsistently.
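One widely used verification technique is forward-confirmed reverse DNS, the approach Google documents for verifying Googlebot: look up the PTR name for the IP, check that it sits under the operator’s domain, then resolve that name and confirm it maps back to the same IP. A sketch in Python, using the standard library’s `socket.gethostbyaddr` and `socket.gethostbyname_ex` by default; the domain, IPs, and stand-in resolvers are hypothetical so the example runs without network access.

```python
import socket
from typing import Callable, Iterable


def verify_bot(ip: str, allowed_suffixes: Iterable[str],
               reverse_lookup: Callable = socket.gethostbyaddr,
               forward_lookup: Callable = socket.gethostbyname_ex) -> bool:
    """Forward-confirmed reverse DNS: the PTR name must sit under an allowed domain
    AND resolve back to the same IP. Either check alone is easy to fake."""
    try:
        hostname = reverse_lookup(ip)[0].lower().rstrip(".")
    except OSError:  # socket.herror and socket.gaierror are both OSError subclasses
        return False
    # Suffixes should start with a dot so "evilmonitor.example.net" cannot pass.
    if not hostname.endswith(tuple(s.lower() for s in allowed_suffixes)):
        return False
    try:
        forward_ips = forward_lookup(hostname)[2]
    except OSError:
        return False
    return ip in forward_ips


# Stand-in resolvers so the example runs offline; the domain and IPs are hypothetical.
PTR = {"198.51.100.5": "probe-1.monitor.example.net",
       "203.0.113.66": "probe-1.monitor.example.net"}  # spoofed PTR claiming to be the monitor
A = {"probe-1.monitor.example.net": ["198.51.100.5"]}


def fake_reverse(ip):
    if ip not in PTR:
        raise socket.herror("no PTR record")
    return PTR[ip], [], [ip]


def fake_forward(name):
    if name not in A:
        raise socket.gaierror("no A record")
    return name, [], A[name]


print(verify_bot("198.51.100.5", [".monitor.example.net"], fake_reverse, fake_forward))  # True
print(verify_bot("203.0.113.66", [".monitor.example.net"], fake_reverse, fake_forward))  # False: spoofed PTR
print(verify_bot("203.0.113.9", [".monitor.example.net"], fake_reverse, fake_forward))   # False: no PTR
```

Traffic that fails this check is exactly the “fails verification” case worth escalating; traffic that passes can be routed through the allowlist instead of the alert queue.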

6) Talk to the SOC lead and commit to changes

This is the part most teams skip: ownership. Rules don’t tune themselves. If the SOC is drowning, the detection strategy has to change quickly, with someone accountable for outcomes.

Detection is only useful if humans can respond to it.

Bottom line

Bad rule writing doesn’t just waste time. It creates the conditions where real incidents get missed.

The attacker doesn’t need to outsmart your controls. They just need to wait until your controls have trained your people to ignore them.

That’s not Hollywood.

That’s operations.